When AI Defends Itself: Google’s Bet on Autonomous Cybersecurity Agents

Google is deploying AI agents to run cybersecurity operations at scale. This shift raises new questions about control, trust, and autonomous defense systems.

TL;DR

Google is embedding AI agents directly into cybersecurity operations, allowing systems to detect, investigate, and respond to threats at scale. This marks a shift from human-led security to AI-driven defense, raising new challenges around control, trust, and oversight.

From Human-Led Security to Autonomous Defense

Cybersecurity has always been a race between speed and complexity. As systems grow more interconnected and threats become more sophisticated, human teams struggle to keep up with the volume of alerts, signals, and potential vulnerabilities. For years, AI has been positioned as a tool to assist analysts by prioritizing alerts or identifying anomalies. That model is now evolving.

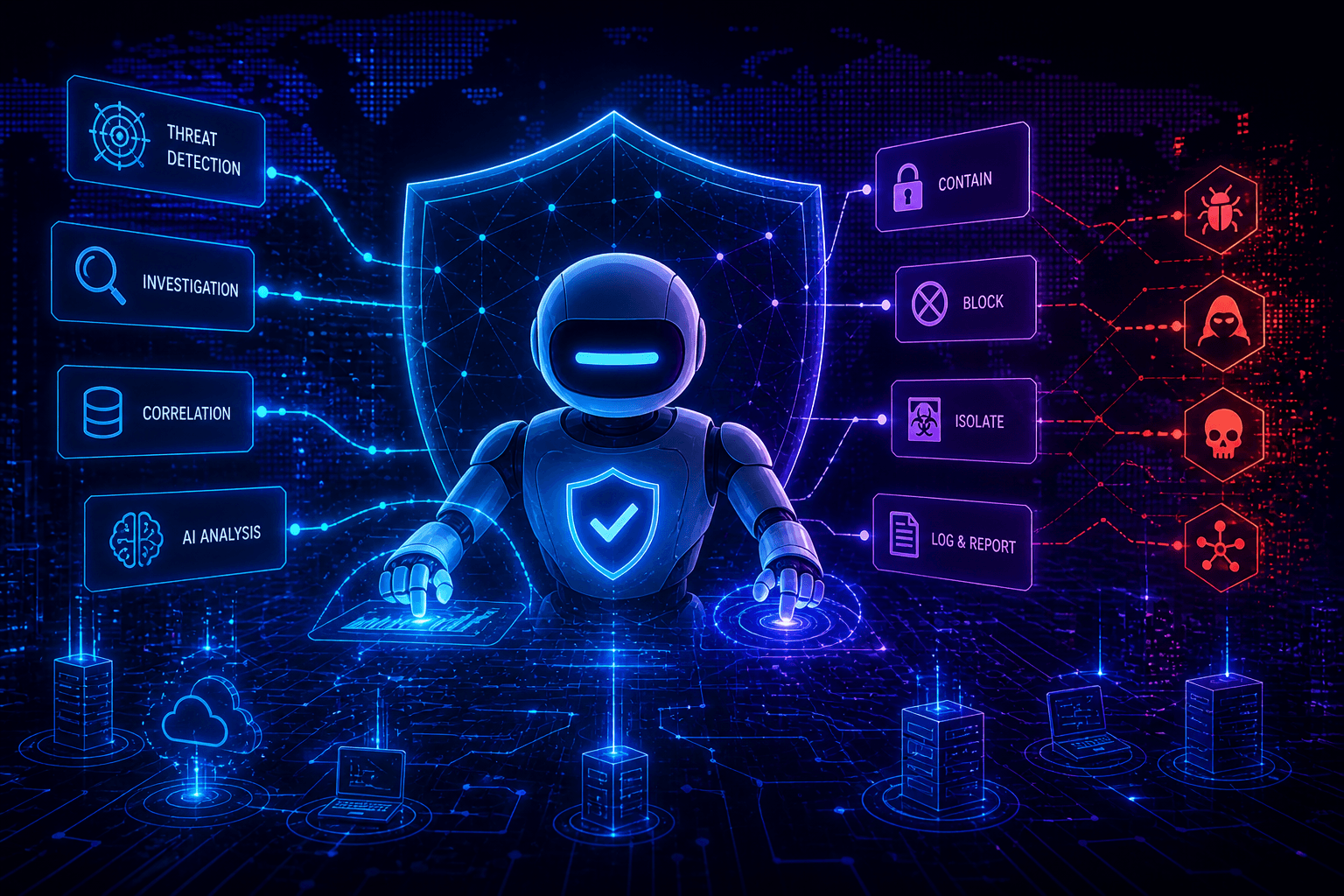

Google is moving toward a different paradigm where AI agents are not just assisting but actively operating within security workflows. These systems are designed to process vast amounts of data, identify threats, investigate incidents, and in some cases take action, all with minimal human intervention. The role of the human shifts from operator to supervisor.

This is not just an incremental improvement. It represents a structural change in how cybersecurity is executed.

Security at a Scale Humans Cannot Match

One of the main drivers behind this shift is scale. Large organizations generate millions of security signals every day. Even the most advanced teams cannot analyze all of them in real time. AI agents, on the other hand, can continuously monitor systems, correlate events across environments, and respond instantly when certain conditions are met.

Google’s approach reflects this reality. By embedding agents across its security stack, the company aims to create a system that can operate at what it describes as near-infinite scale. This allows for faster detection, shorter response times, and the ability to handle threats that would otherwise go unnoticed.

However, scale alone is not the full story. The introduction of autonomous agents changes the nature of decision making in security environments.

When Detection and Response Become Automated

Traditionally, security workflows follow a clear structure. A system detects a potential issue, an analyst investigates it, and a decision is made about how to respond. Each step involves human judgment, context, and accountability.

With AI agents, these steps begin to merge. Detection, investigation, and response can happen within a single system, often in seconds. An agent might identify suspicious activity, analyze related data, and isolate a system or block an action without waiting for human input.

This creates efficiency, but it also introduces new questions. If an agent makes a mistake, who is responsible? If a system overreacts and disrupts legitimate activity, how quickly can it be corrected? And perhaps most importantly, how do organizations ensure that automated decisions align with their risk tolerance and operational priorities?

The Rise of Agent-on-Agent Security

As defenders adopt AI agents, attackers are likely to do the same. This leads to a new dynamic where autonomous systems are interacting, adapting, and responding to each other in real time. Security becomes less about static defenses and more about continuous interaction between competing systems.

In this environment, speed becomes critical, but so does predictability. An agent that reacts too aggressively may create instability. One that reacts too slowly may allow threats to propagate. Balancing these factors requires a level of control that is difficult to achieve when systems are operating autonomously.

The challenge is not just building effective agents, but ensuring that their behavior remains aligned with human intent over time.

Visibility Becomes a Critical Weak Point

One of the most immediate challenges introduced by autonomous security agents is visibility. When humans are directly involved in every step, they naturally build an understanding of how and why decisions are made. With agents operating independently, that visibility can quickly diminish.

It is not enough to know that an action was taken. Organizations need to understand the reasoning behind it. What signals triggered the response? What data was considered? What alternatives were evaluated?

Without this level of insight, it becomes difficult to trust the system. It also becomes difficult to audit decisions, improve performance, or identify when something has gone wrong. This is where many current deployments fall short. They optimize for speed and efficiency, but do not provide sufficient transparency into how decisions are made.
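One way to picture the missing transparency is a structured record written for every autonomous action, capturing the three questions above. The following is a minimal sketch in Python, not a description of Google's tooling: the `DecisionRecord` class, its field names, and the sample values are all hypothetical.

```python
import json
from dataclasses import dataclass, field, asdict
from datetime import datetime, timezone


@dataclass
class DecisionRecord:
    """Hypothetical audit entry for a single autonomous action."""
    action: str                    # what the agent did
    target: str                    # what it acted on
    triggering_signals: list[str]  # which signals triggered the response
    data_considered: list[str]     # which data sources were consulted
    alternatives: dict[str, str]   # rejected alternative -> reason for rejection
    timestamp: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )

    def to_json(self) -> str:
        # One JSON object per action, suitable for an append-only audit log.
        return json.dumps(asdict(self), sort_keys=True)


# Illustrative values only; none of these names come from a real system.
record = DecisionRecord(
    action="isolate_host",
    target="host-example-01",
    triggering_signals=["unusual_outbound_traffic", "new_admin_login"],
    data_considered=["network_flow_logs", "identity_provider_logs"],
    alternatives={
        "block_single_connection": "traffic spanned many destinations",
        "alert_only": "activity was still in progress",
    },
)
line = record.to_json()
print(line)
```

A record like this does not make an agent's reasoning correct, but it gives auditors something concrete to review when a decision is questioned.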

Control Is No Longer Binary

In traditional systems, control is relatively straightforward. Access is either granted or denied. Actions are either allowed or blocked. AI agents operate in a more fluid space where decisions are based on probabilities, patterns, and evolving context.

This means control can no longer be treated as a binary concept. Organizations need mechanisms to guide agent behavior, constrain actions dynamically, and intervene when necessary. This includes defining boundaries for what agents can do, under what conditions they can act independently, and when human approval is required.
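The three boundaries described above (what an agent may do, when it may act alone, and when a human must approve) can be sketched as a small policy check. This is an illustrative assumption rather than any vendor's actual mechanism: `ActionPolicy`, `Verdict`, `evaluate`, the action names, and the confidence threshold are all hypothetical.

```python
from dataclasses import dataclass
from enum import Enum


class Verdict(Enum):
    ALLOW = "allow"                        # agent may act on its own
    REQUIRE_APPROVAL = "require_approval"  # a human must sign off first
    DENY = "deny"                          # outside the agent's scope


@dataclass(frozen=True)
class ActionPolicy:
    """Hypothetical boundaries for a single agent."""
    allowed_actions: frozenset[str]     # everything the agent may ever do
    autonomous_actions: frozenset[str]  # subset it may do without approval
    min_confidence: float               # confidence needed to act alone


def evaluate(policy: ActionPolicy, action: str, confidence: float) -> Verdict:
    """Return a graded verdict instead of a simple allow/block decision."""
    if action not in policy.allowed_actions:
        return Verdict.DENY
    if action in policy.autonomous_actions and confidence >= policy.min_confidence:
        return Verdict.ALLOW
    # Permitted in principle, but either high-impact or low-confidence.
    return Verdict.REQUIRE_APPROVAL


# Illustrative policy: blocking an IP is low impact, isolating a host is not.
policy = ActionPolicy(
    allowed_actions=frozenset({"block_ip", "isolate_host"}),
    autonomous_actions=frozenset({"block_ip"}),
    min_confidence=0.9,
)

print(evaluate(policy, "block_ip", 0.95))        # confident, low impact
print(evaluate(policy, "block_ip", 0.50))        # low confidence
print(evaluate(policy, "isolate_host", 0.99))    # high impact
print(evaluate(policy, "delete_account", 0.99))  # never permitted
```

The middle verdict is the point of the sketch: the same action can be automatic in one context and gated in another, which a plain allow/deny list cannot express.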

It also involves creating feedback loops where agent behavior can be evaluated and adjusted over time. Without these mechanisms, organizations risk deploying systems that operate beyond their intended scope.

Trusting Systems That Act on Their Own

At the core of this shift is a question of trust. Can organizations rely on systems that act independently in critical security contexts? Trust in this case is not just about accuracy. It is about consistency, predictability, and alignment with organizational goals.

Building that trust requires more than technical performance. It requires frameworks for accountability, mechanisms for oversight, and the ability to intervene when necessary. It also requires a cultural shift where teams become comfortable delegating certain responsibilities to machines while maintaining ultimate control.

This is not a trivial transition. It changes how security teams operate, how decisions are made, and how risk is managed.

The Beginning of Autonomous Security Infrastructure

Google’s move signals a broader trend. AI agents are becoming embedded in the core infrastructure of cybersecurity. They are not just tools that analysts use. They are systems that act, decide, and respond within critical environments.

This creates new opportunities for efficiency and resilience, but it also introduces new risks. Systems that operate at scale can amplify both good and bad outcomes. A well-designed agent can prevent incidents before they escalate. A poorly controlled one can create new vulnerabilities.

The organizations that succeed in this new landscape will be those that treat agent deployment as a security problem in itself. They will focus not just on what agents can do, but on how those actions are governed, monitored, and controlled.

What This Means Going Forward

The shift toward autonomous cybersecurity is already underway. The question is not whether agents will become part of security operations, but how they will be integrated and controlled.

Organizations need to start thinking about security in a world where decisions are made continuously by systems rather than discretely by humans. This requires new models of oversight, new tools for visibility, and new approaches to control.

AI agents may be able to defend systems at a scale that humans cannot match. Ensuring that they do so safely will define the next phase of cybersecurity.