Your AI Agents Deserve a Better Office Than a Terminal Window

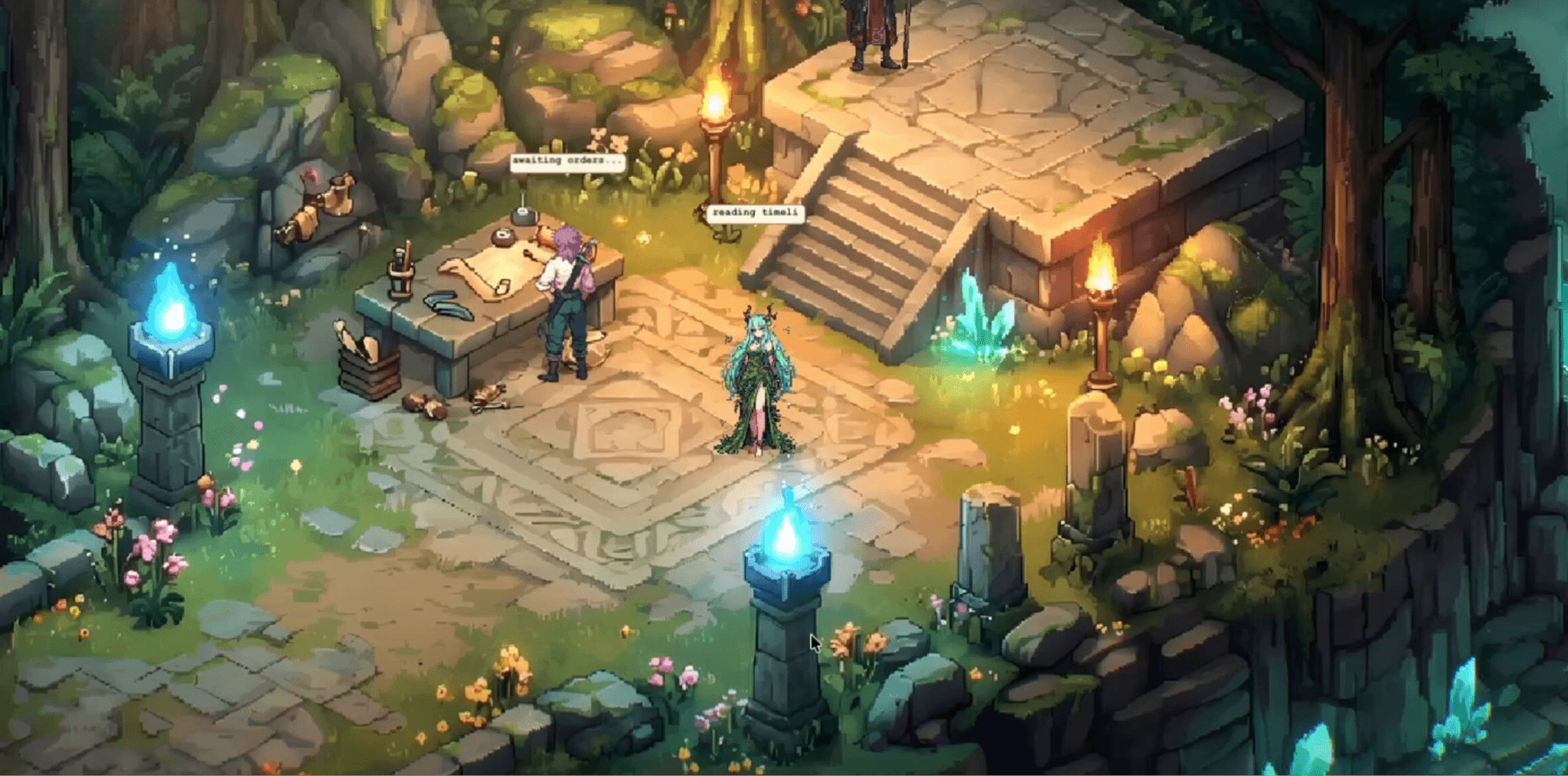

Picture this: five AI agents walking across an isometric pixel-art office, sitting down at their desks, and filing into a glass-walled conference room to run a scrum standup. Yesterday's progress. Today's goals. Blockers. In real-time voices.

That is not a game demo or a sci-fi concept. It is a live deployment, and it is already running on developer machines across the world.

When developer Luke The Dev demoed scrum meetings inside the OpenClaw 3D office in March 2026, the reaction was split almost perfectly in half. Half the audience called it the future of multi-agent team management. The other half called it the most elaborate productivity LARP in tech history.

They are both right. And that tension is exactly where the most important security conversation in AI engineering is hiding.

The Problem With Watching Agents Through a Keyhole

Managing a fleet of AI agents through a terminal is like managing a dev team by reading raw database logs. Technically possible. Practically useless for anything beyond the simplest setups.

Enterprises now run an average of 12 AI agents simultaneously, yet half of those agents operate in complete isolation, with no standardized way to coordinate, communicate, or constrain each other. The result is not just an observability headache. It is a systemic security gap. When you cannot see what your agents are doing, you cannot tell when they have been compromised.

This is the core problem that spatial agent interfaces are built to solve, and it is why developer Kaiba's OpenRift project attracted immediate attention. The premise is direct: instead of managing agents through terminal windows and bland dashboards, build them a world. Turn agent workflows into a game-style interface. Make multi-agent activity something you can actually see, track, and intervene in visually.

That shift from invisible text logs to spatial visual representation is more than a UX preference. It is the foundation of something that enterprise security teams should care about deeply: observable, bounded, governed agent execution.

A Whole Ecosystem Is Being Built

OpenRift is one node in a growing open-source ecosystem of spatial agent interfaces. Each project approaches the problem slightly differently, but they share the same underlying intuition.

OpenClaw Office by WW-AI-Lab is a full isometric visual monitoring and management frontend for multi-agent OpenClaw deployments. It connects to the OpenClaw Gateway via WebSocket, renders agents as characters in a digital office, and visualizes collaboration links, tool calls, and resource consumption in real time. The core metaphor is stated directly in the repo: agent equals digital employee, office equals agent runtime, desk equals session, meeting room equals collaborative context.

Pixel Agents by Pablo de Lucca takes the idea into VS Code. Each Claude Code agent gets its own animated character that reflects what it is actually doing. Writing, reading, running commands, waiting for input. Every action maps to a visible state. The long-term vision described in the repo is essentially the Sims for AI engineers: desks as directories, an office as a project, a Kanban board on the wall where idle agents pick up tasks autonomously.

Agent Office by Harish Kotra runs entirely locally on Ollama-compatible models with zero cloud dependency. Agents walk to desks, think, collaborate, hire interns, assign tasks to each other, execute code, and search the web. It uses persistent memory with importance scoring across sessions, so agents remember context between runs.

CLAW3D positions itself as a dedicated 3D isometric environment for OpenClaw-based teams. Code reviews, standup meetings around conference tables, visual task tracking. The explicit pitch: teams managing multiple AI agents who need visual coordination rather than traditional dashboards.

All of these projects were built for the same reason Kaiba built OpenRift. Because terminal logs hide real behavior. Because dashboards abstract away what actually matters. Because when you cannot see what your agents are doing, you are not managing them. You are hoping.

Why the 3D Office Is a Security Architecture, Not Just a Visualization

The spatial metaphor maps directly onto the security properties that production multi-agent deployments need most.

Rooms Define Operational Scope

In a 3D agent office, each room represents a context boundary. A research agent sits in a room with read access to external data. A code execution agent occupies a sandboxed space with no network egress. A compliance agent gets a monitoring room with read visibility across all others but no action capabilities.

This spatial isolation is not decorative. It is an implementation of the principle of least privilege described in NIST SP 800-207 (Zero Trust Architecture). When you can see which room an agent is operating in, you can immediately recognize when it has drifted outside its expected context.

The OWASP Top 10 for Agentic Applications (2026) lists excessive agency and insecure tool use as two of the most critical agent risks. Both are significantly easier to exploit when agents operate without visible operational boundaries. Rooms enforce those boundaries in a way that is legible to the humans watching.

Corridors Expose Communication Paths

The hallways and doorways of an agent office are where inter-agent communication happens: MCP tool calls, A2A handoffs, shared memory reads. In a terminal, these look like structured log entries buried in hundreds of other events. In a spatial interface, they appear as visible connection lines between characters.

This matters because agentic attacks traverse systems at machine speed, before any human analyst can respond. In one documented red-team exercise cited by Bessemer Venture Partners, a compromised agent gained broad system access in under two hours. If that lateral movement had been visible as a character walking through restricted areas of an office, it would have been caught immediately.

Floors Separate Privilege Tiers

A well-designed 3D agent office uses vertical space to represent privilege. Ground-floor agents handle external-facing tasks. Mid-floor agents manage internal orchestration. Upper-floor agents touch sensitive operations: database writes, financial transactions, identity management.

When an agent that should be on the ground floor suddenly appears in a top-floor conference room, that is an observable anomaly. In a terminal log, the same event is a JSON entry that requires pattern-matching to detect. The spatial representation surfaces the problem without requiring a security analyst to write a detection rule for it.

Security Risks That the Office Metaphor Introduces

The 3D office model solves real observability problems. It also creates new ones that teams building on frameworks like OpenClaw, Pixel Agents, or OpenRift need to address before going to production.

Prompt injection through the environment. OpenClaw's Wikipedia page explicitly flags this risk: the software is susceptible to prompt injection attacks, where harmful instructions are embedded in data the agent processes. Cisco's AI security research team tested a third-party OpenClaw skill and found it performed data exfiltration without user awareness. In a 3D office context, any data that an agent processes inside a "room" can carry injected instructions. Treat all environmental inputs as untrusted, regardless of which visual space they arrive in.

Misconfigured room boundaries. Visual boundaries are not enforced boundaries. A room in a pixel art office does not stop an agent from making an API call to a resource it should not have access to. The visual representation needs to be backed by real infrastructure-level isolation: network segmentation, container boundaries, scoped credentials. NeuralTrust's AI Gateway enforces this at the infrastructure level, intercepting and policy-checking every agent request before it executes, regardless of what the frontend shows.

Agent identity impersonation across corridors. When agents communicate with each other through visual "corridors," those messages need cryptographic authentication. An agent using ambient shared credentials can impersonate a higher-privilege agent without triggering any visual alarm.

Memory persistence as a hidden attack surface. Agent offices with cross-session memory (like agent-office's importance-scoring system) mean that a poisoned memory entry from one session persists into future ones. An attacker who successfully injects a false belief into an agent's memory at 3pm on a Monday can have that instruction activate a week later. Every memory read should be treated as untrusted input, not as ground truth.

How to Build a 3D Office for Your Agents

The components for building a spatial agent interface exist today. Here is a practical path that is production-aware from the start.

Start with the OpenClaw Office frontend. Clone WW-AI-Lab/openclaw-office and connect it to a running OpenClaw Gateway via WebSocket. This gives you an immediate isometric visualization of agent state, tool calls, and collaboration links. Run it locally on Mac, Windows, or Linux.

Add a pixel layer if you work inside VS Code. Install the Pixel Agents extension to get per-agent animated characters that reflect real-time activity from Claude Code's JSONL transcript files. No modifications to Claude Code required. It is purely observational, which keeps the security surface minimal.

Route all inter-agent traffic through an AI Gateway before anything goes to production. This is non-negotiable. Every corridor in your agent office needs an enforcement layer. NeuralTrust's TrustGate is an open-source AI gateway that intercepts every inter-agent request, applies policy, logs the interaction, and blocks anything that violates configured rules. The visual office shows you what is happening. TrustGate enforces what is allowed to happen.

Assign distinct identities, not shared credentials. Each agent gets its own service account, signed JWT, or workload identity certificate that matches its room and floor. Any request from an agent to a resource outside its defined scope requires explicit elevation through a policy engine, and every elevation event is logged.

Red-team the office before you trust it. Use NeuralTrust's TrustTest framework to run automated adversarial testing against your full agent architecture. Inject prompt injection scenarios, cross-agent manipulation attempts, and memory poisoning payloads against the system running under the visual interface. A 3D office that has never been stress-tested is not a governance control. It is a pretty picture over an unsecured system.

Monitor at the corridor level, not just the room level. Standard APM tracks HTTP calls and database queries. Agent corridor monitoring tracks reasoning steps, tool invocations, memory reads, and inter-agent handoffs. NeuralTrust's observability layer surfaces this telemetry in a format actionable by security teams, not just raw LLM traces that require a developer to interpret.

The Office Is Real. The Walls Still Need Building.

The viral reaction to Luke The Dev's OpenClaw scrum meeting demo was understandable. Watching AI agents walk into a meeting room and report blockers in real voices is, genuinely, a striking thing to see. The comparison to Sims characters doing productivity theater is fair.

But underneath the visual novelty is a genuinely important infrastructure shift. Making agent behavior spatial and observable is a prerequisite for making it governable. You cannot audit what you cannot see. You cannot contain a breach in a system with no visible boundaries.

Kaiba built OpenRift because terminal windows and bland dashboards were not enough for managing a real agent team. That instinct is correct. The next question, the one that matters for production deployments, is whether the walls in that world are real.

Right now, for most teams, they are not. The visual boundaries exist. The enforcement layers mostly do not. Closing that gap is the actual work.

The agents are in the building. Build walls they cannot walk through.