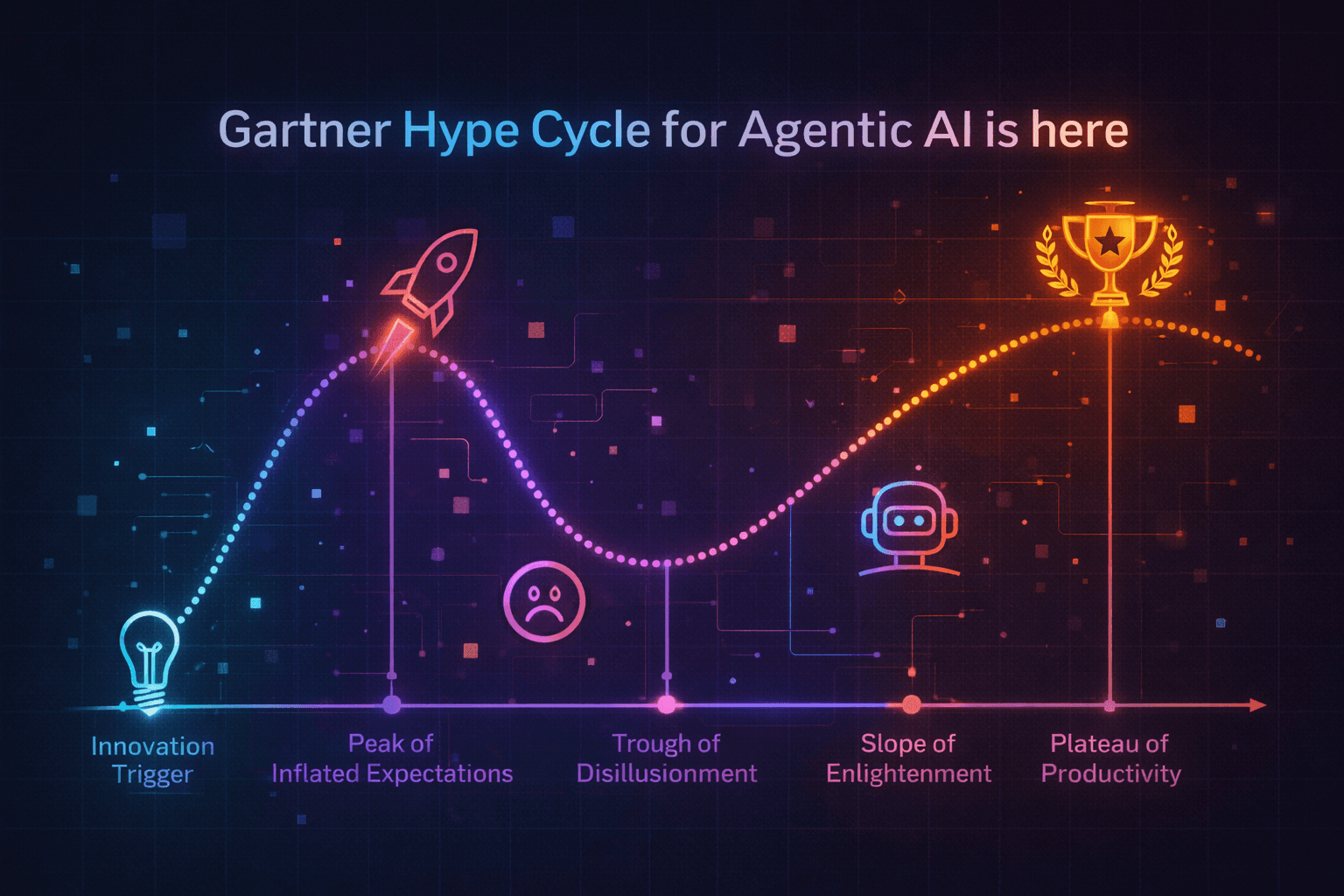

Gartner Hype Cycle for Agentic AI is here

Enterprises are deploying AI agents at speed. The governance and security infrastructure needed to match that pace is not keeping up. That gap is where the real risk lives.

Something has shifted in the last six months. AI agents have gone from pilot curiosity to active deployment roadmap across enterprise security, engineering, and operations teams. The conversations we are having with CISOs and AI leaders are no longer about whether to deploy agents. They are about how fast, how many, and what could go wrong.

Gartner recently dedicated an entire Hype Cycle to mapping the agentic AI landscape, a signal that this is no longer a speculative technology. It is arriving. And the more we look at what enterprises are actually building, the clearer the problem becomes: the deployment curve is outrunning the security and governance curve by a significant margin.

That is not a reason to slow down. It is a reason to build the right foundations now, before scale makes the gaps irreversible.

Three security gaps that matter right now

When you look at where enterprises are struggling, three problems come up consistently.

MCP is expanding the attack surface faster than most teams realize.

Model Context Protocol has become the de facto standard for connecting AI agents to tools, data sources, and external systems. Adoption has been extraordinarily fast. But most MCP deployments we see are ungoverned: no centralized policy enforcement, no authentication controls beyond shared API keys, no visibility into what agents are actually calling and when. Every MCP server without proper governance is an entry point. Tool poisoning, unauthorized cross-agent delegation, and credential abuse are not hypothetical threats in this architecture. They are the natural consequence of moving fast without guardrails.

Agent identity is an unsolved problem in most organizations.

Traditional identity and access management was built for humans and, to some extent, service accounts. AI agents are neither. They are long-running, nondeterministic, capable of chaining tool calls across systems, and often operating without a clear owner. Most enterprises deploying agents today cannot answer basic questions: which agent accessed which system, with whose credentials, and what did it do? Without that foundation, security and compliance are guesswork.
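To make those three questions concrete, here is a minimal sketch of the kind of record that would let a team answer them. The field names, agent IDs, and the `accesses_by_agent` helper are all hypothetical, chosen for illustration; they are not drawn from any particular product or standard.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass(frozen=True)
class AgentAuditRecord:
    """One agent action. All field names are illustrative assumptions."""
    agent_id: str        # which agent acted
    owner: str           # the human or team accountable for the agent
    system: str          # which system it accessed
    credential_id: str   # whose credentials it used
    action: str          # what it did
    timestamp: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )


def accesses_by_agent(records, agent_id):
    """Return every recorded action for a single agent, in log order."""
    return [r for r in records if r.agent_id == agent_id]


# Hypothetical sample data.
records = [
    AgentAuditRecord("agent-billing-01", "finance-team", "crm", "svc-key-17", "read:customer"),
    AgentAuditRecord("agent-triage-02", "secops", "ticketing", "svc-key-03", "create:ticket"),
    AgentAuditRecord("agent-billing-01", "finance-team", "ledger", "svc-key-17", "write:invoice"),
]

for r in accesses_by_agent(records, "agent-billing-01"):
    print(r.system, r.credential_id, r.action)
```

The point of the sketch is the shape of the data, not the storage: if every agent action carries an agent identity, an accountable owner, and the credential used, the basic questions become a lookup rather than an investigation.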

Human oversight cannot scale to match agent volume.

This is the core tension in agentic AI security. The value of agents comes from their ability to operate autonomously across complex workflows. But the security model most organizations have today relies on humans reviewing agent behavior after the fact, or approving individual actions in real time. At the velocity and volume agents will operate, that model breaks. Automated runtime controls are not a nice-to-have. They are the only architecture that works at scale.

The organizations building agent governance infrastructure now will have a structural advantage over those treating it as a cleanup project later. The gap compounds over time.

What the security layer actually needs to cover

Based on what we are seeing across enterprise deployments, a mature agentic AI security posture needs to address six things:

→ Zero trust for agents, treating every agent as an untrusted nonhuman identity. Every tool call, every data access, every action requires authentication and authorization, not inherited trust from the deploying system.

→ Centralized MCP governance. A gateway layer that makes MCP access observable, auditable, and policy-controlled, before agents proliferate across the organization.

→ Runtime monitoring with real-time visibility into agent behavior, reasoning traces, and tool call chains. Not logs you review the next day. Signals you can act on in the moment.

→ Human-in-the-loop controls for high-stakes actions. Sensitive data access and critical transactions should require explicit approval before execution, not after.

→ A complete agent inventory. Sanctioned and unsanctioned. You cannot govern what you cannot see, and in most enterprises today, agent sprawl is already real.

→ Automated policy enforcement that operates at agent speed. Rules that fire at runtime, not in post-incident review.
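Several of these items can be pictured as a single gateway check that sits in front of every tool call. The sketch below is one possible shape, under stated assumptions: the `Gateway` class, the tool names, and the policy format are hypothetical, and a real deployment would add authentication, persistent audit storage, and an actual approval workflow.

```python
from dataclasses import dataclass, field
from enum import Enum


class Decision(Enum):
    ALLOW = "allow"
    DENY = "deny"
    NEEDS_APPROVAL = "needs_approval"


@dataclass
class ToolCall:
    agent_id: str
    tool: str
    args: dict


@dataclass
class Gateway:
    """Hypothetical policy gateway: deny by default, log every decision."""
    allowed_tools: dict[str, set[str]]   # explicit per-agent grants
    high_stakes_tools: set[str]          # tools that need human approval
    audit_log: list = field(default_factory=list)

    def authorize(self, call: ToolCall) -> Decision:
        granted = self.allowed_tools.get(call.agent_id)
        if granted is None or call.tool not in granted:
            # Zero trust: unknown agents and ungranted tools are refused.
            decision = Decision.DENY
        elif call.tool in self.high_stakes_tools:
            # Human-in-the-loop: hold the call until someone approves it.
            decision = Decision.NEEDS_APPROVAL
        else:
            decision = Decision.ALLOW
        # Every decision is recorded, including denials.
        self.audit_log.append((call.agent_id, call.tool, decision.value))
        return decision


# Hypothetical policy and calls.
gateway = Gateway(
    allowed_tools={"agent-billing-01": {"crm.read", "payments.refund"}},
    high_stakes_tools={"payments.refund"},
)

read = gateway.authorize(ToolCall("agent-billing-01", "crm.read", {"id": 42}))
refund = gateway.authorize(ToolCall("agent-billing-01", "payments.refund", {"amount": 100}))
rogue = gateway.authorize(ToolCall("agent-unknown", "crm.read", {}))
print(read, refund, rogue)
```

The design choice worth noting is the default: an agent with no entry in the policy gets nothing, and the decision is made and logged before the tool runs rather than reconstructed afterward.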

The window to get ahead of this is still open

Most enterprises are still in the early stages of agent deployment, primarily automating existing workflows rather than building fully autonomous systems. That makes this the right moment to invest in security infrastructure: before the complexity of multiagent systems, agent-to-agent communication, and cross-platform orchestration makes governance far harder.

The organizations that treat agentic AI security as a first-class engineering concern from the start, not an afterthought bolted on after deployment, will be the ones that scale confidently. The ones that skip this step will spend the next two years in reactive mode.

Analysts tracking this space are now clear that semiautonomous deployments, where there is meaningful human supervision backed by automated controls, are the right model for enterprise agentic AI today. Not because agents are not capable. Because the governance infrastructure to support full autonomy at enterprise scale is still being built. The smart move is to build that infrastructure in parallel with your agent deployments, not after them.

Agentic AI is not slowing down. The enterprises that come out ahead will be those that treat the security and governance layer as a competitive advantage, not a compliance checkbox.